I recently came across a really important paper that might have flown under the radar for many people. For this reason, I’m highlighting it here and will soon write up an F1000 Recommendation. This is news that needs to be heard, understood and appreciated by conservation scientists and environmental policy makers everywhere.

Sean Sloan and colleagues (including conservation guru, Bill Laurance) have just published a paper entitled ‘Remaining natural vegetation in the global biodiversity hotspots’ in Biological Conservation, and in it we are presented with some rather depressing and utterly sobering data.

Unless you’ve been living under a rock for the past 20 years, you’ll have at least heard of the global Biodiversity Hotspots (you can even download GIS layers for them here). From an initial 10, the list grew to 25 and then to 34; with the most recent addition of the Forests of East Australia, we now have 35 Biodiversity Hotspots across the globe. The idea behind these is to focus conservation attention, investment and intervention in the areas with the most unique species assemblages that are simultaneously experiencing the most human-caused disturbances.

Indeed, today’s 35 Biodiversity Hotspots include 77 % of all mammal, bird, reptile and amphibian species (holy shit!). They also harbour about half of all plant species, and 42 % of endemic (not found anywhere else) terrestrial vertebrates. They also have the dubious honour of hosting 75 % of all endangered terrestrial vertebrates (holy, holy shit!). Interestingly, it’s not just amazing biological diversity that typifies the Hotspots – human cultural diversity is also high within them, with about half of the world’s indigenous languages found therein.

Of course, to qualify as a Biodiversity Hotspot, an area needs to be under threat – and under threat they are. There are now over 2 billion people living within Biodiversity Hotspots, so it comes as no surprise that about 85 % of their area is modified by humans in some way.

A key component of the original delimitation of the Hotspots was the amount of ‘natural intact vegetation’ (mainly undisturbed by humans) within an area. While revolutionary 30 years ago, these estimates were based to a large extent on expert opinions, undocumented assessments and poor satellite data. Other independent estimates have been applied to the Hotspots to estimate their natural intact vegetation, but these have rarely been made specifically for Hotspots, and they have tended to discount non-forest or open-forest vegetation formations (e.g., savannas & shrublands).

So with horribly out-of-date vegetation assessments fraught with error and uncertainty, Sloan and colleagues set out to estimate what’s really going on vegetation-wise in the world’s 35 Biodiversity Hotspots. What they found is frightening, to say the least.

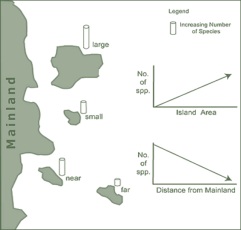

I’ve just read an elegant little study that has identified the main determinants of differences in the slope of species-area curves and species-accumulation curves.
