I used to think it was merely a post-COVID-19 hiccough, but the extensive delays in receiving reviews for submitted manuscripts that I now see almost constantly are symptoms of a much larger problem. That problem is, in a nutshell, how awfully journals are treating both authors and reviewers these days.

I regularly hear stories from editors handling my papers, as well as accounts from colleagues, about the ridiculous number of review requests they send with no response. It isn’t uncommon to hear that editors ask more than 50 people for a review (yes, you read that correctly), to no avail. Even when the submitting authors provide a list of potential reviewers, it doesn’t seem to help.

The ensuing delays in time to publication are really starting to hurt people, and the most common victims are early-career researchers who need to build up their publication track records to secure grants and jobs. And the underhanded, dickhead tactic of resetting the submission clock by calling a ‘major review’ a ‘rejection with opportunity to resubmit’ doesn’t fucking fool anyone. The ‘average time from submission to publication’ claimed by most journals is a bald-faced lie because of their surreptitious manipulation of handling statistics.

The most obese pachyderm in the room is, of course, the extortionate prices (and it is nothing short of extortion) charged for publishing in most academic journals these days. For example, I had to spend more than AU$17,000 to publish a single open-access paper in Nature Geoscience last year. That was just for one paper. Never again.

Anyone with even a vestigial understanding of economics feels utterly exploited when asked to review a paper for nothing. As far as I am aware, there isn’t a reputable journal out there that pays for peer reviews. As a whole, academics are up-to-fucking-here with this arrangement, so it should come as no surprise that editors are struggling to find reviewers.

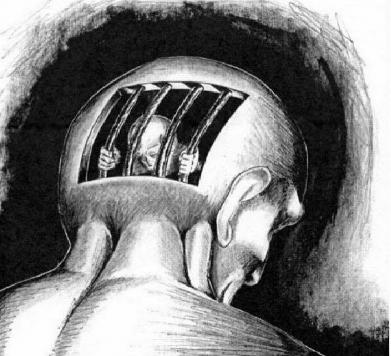

I contend that publishing articles in nearly all peer-reviewed journals amounts to a form of

A take on a small section of my recent book,

This is probably more of an act of self-therapy on a Friday afternoon to alleviate some frustration, but it is an important issue all the same.